You would expect a scientist like me to be defensive and offer an enthusiastic “yes” to the question of whether investment in research always pays off. However, my answer is a “qualified no,” with a few rare exceptions. Research funding is not unlike food production; it is not the amount, but the distribution of research funds that matters. Government funding for research, in particular, has become more and more dependent on project specifications designed by lawmakers and their staff and the interpretation of these specifications by government employees, who are usually quite removed from scholarly activity. Of course, there are panels of experts that assess the applications. Because these panels are composed of people who like to see funding in their own areas of interest, and since they prefer projects with predictable outcomes, they are usually unlikely to select an application proposing to explore uncharted waters. Such a system is averse to high-risk, out-of-the-box proposals. However, history has shown that it is often precisely this type of research that leads to true innovation.

Let us examine this a little bit further! There was no question that the Human Genome Project was a worthy and excellent proposal and that the National Institutes of Health was the appropriate U.S. agency to oversee the country’s participating laboratories. In scope and complexity, this project was an endeavor not unlike that of putting a man on the moon. As a founding member of the Human Genome Study Section in 1989, I can say that initially we received many applications proposing alternative methods for physical mapping and DNA sequencing. Interestingly, however, the method for whole genome sequencing that produced the human genome sequence announced by President Clinton at the White House in 2000 [1] had already been published in 1981 [2]. The only new elements were to enhance the throughput of this “shotgun DNA sequencing” method by deploying robotics and advances in computing hardware, which were mainly financed by the private sector. The shotgun sequencing method itself, however, which by that time already had produced the first complete genome sequence, was supported by a relatively modest grant from the U.S. Department of Agriculture’s (USDA) Competitive Grants Program [3].

There was something impressive about this USDA program: 1) my application was essentially high risk because nothing similar to this new method had ever been contemplated; 2) the amount of money, $50,000, was very small compared to the later NIH funding; and 3) the government program was run by a prominent plant geneticist on a one-year leave from a university rather than by a government employee, and he had the vision that funding such a project might have a disproportionate impact.

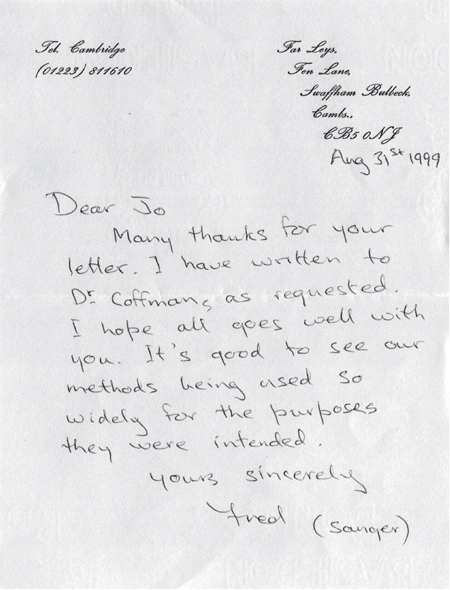

It is important to note that a consideration of expected impact can render the publication process superficial. Peter Czernilofsky, who sequenced the first retroviral oncogene [4] that provided a basis for the work leading to Harold Varmus’s and Michael Bishop’s Nobel Prize in Physiology or Medicine in 1989, suggested to me that I should ask Bishop to communicate my manuscript to the Proceedings of the National Academy of Sciences, but a reviewer persuaded Bishop that it was too trivial an improvement and not worthy of being published in the Proceedings. Thus, the review of grant applications and manuscripts contributed by outsiders may well hinder innovation. When the work was eventually published, in Nucleic Acids Research in 1981, it laid the foundation for a citation record [5], and Fred Sanger, winner of two Nobel Prizes, later acknowledged in a handwritten note that “shotgun sequencing” was synergistic with his own “Sanger sequencing” method (see Figure 1). As illustrated by this example, outsiders can still be successful, even with limited resources, and eventually overcome the bias of the establishment.

There is always a race between new methods and discoveries. While most research funding today is directed to what is seen as fashionable by non-scientists, like politicians and administrators, discoveries are serendipitous. Perhaps more importantly, they are often made by individuals, not by teams or large programs. I had conceived the shotgun sequencing method in 1974, at an EMBO workshop on restriction enzymes and DNA sequencing in Belgium, and reduced it to practice in 1980 at UC Davis using the small USDA grant I mentioned above. I worked mostly by myself, with only a few volunteers. As a non-faculty member, I did not even have my own laboratory.

Another example that illustrates the power of individual vision and endeavor in science is the work of Selman Waksman, a soil bacteriologist who was the founder of my Institute at Rutgers University. Waksman’s laboratory developed a cross streak test to screen soil samples for bacteria that secrete toxins that kill other bacteria [6]. This was done before NIH and NSF funding became available. I would venture to say that even with NIH in existence, this type of research likely would have been regarded, at the time, as not relevant for medicine. Remarkably, however, it led to the discovery, by Waksman’s student Albert Schatz, of a bacterium that produces streptomycin, which became the first cure for tuberculosis. The discovery, and its reduction to practice, was recognized by the 1952 Nobel Prize in Physiology or Medicine awarded to Waksman.

These examples illustrate that innovation requires a certain level of risk-taking and a sustained commitment to invest in individuals. To illustrate the success of this principle of guiding research funding, I would like to point to three examples: the Kaiser Wilhelm Gesellschaft, today the Max Planck Gesellschaft, which operates many institutes in Germany; the former Bell Laboratories; and the Carnegie Institution for Science.

The concept of the Kaiser Wilhelm Gesellschaft was to appoint outstanding scientists and provide them with a general research budget to work on whatever they chose. During the first decades of the twentieth century, the staff included legendary scientists such as Max Planck, Albert Einstein, Otto Hahn, and Werner Heisenberg. It later included several other famous chemists, such as Feodor Lynen, who co-discovered the regulation of cholesterol and whom I was fortunate to have on my thesis committee. In total, these scientists won an impressive 33 Nobel Prizes for their path-breaking discoveries. Continuing this tradition of investing in individuals and their vision, each Max Planck Institute lab director today still receives a certain level of basic funding for scientists, technicians, supplies, and equipment, although many research projects also require supplemental funding from competitive grants programs, which are also restricted to specific subjects.

Figure 1

True independence from outside funding for basic research was possible at Bell Laboratories, the R&D organization initially of the Bell Telephone Company, and later of AT&T. The organizing principle at Bell Labs was to allow a carefully selected group of research scientists to work mostly independently, within the larger context of the organization, with very limited support staff, and to pursue their own research programs in materials science, chemistry, physics, mathematics, and computer science.

Bell Labs produced thirteen Nobel Laureates, including many in physics: Clinton J. Davisson in 1937 for characterizing matter; John Bardeen, Walter Brattain, and William Shockley in 1956 for inventing the transistor; Philip Anderson in 1977 for characterizing the electronic structure of glasses and magnetic materials; Arno Penzias and Robert Wilson in 1978 for discovering cosmic background radiation; Steven Chu in 1997 for new methods to study atoms with laser light; Horst Störmer and Daniel Tsui in 1998 for the discovery of the fractional quantum Hall effect; and William Boyle and George Smith in 2009 for inventing CCD sensors. Eric Betzig broke the stream of physics prizes with his 2014 Nobel Prize in Chemistry for near-field optical microscopy. In addition, scientists and engineers at Bell Labs have had a major impact on the development of communications technology, including the development of the ion laser by Eugene Gordon and others; and on computation, including the seminal contribution to information theory by Claude Shannon, the creation of new programming languages like C by Dennis Ritchie and Brian Kernighan and C++ by Bjarne Stroustrup, and the introduction of the revolutionary UNIX operating system by Ken Thompson and Dennis Ritchie. These largely individual endeavors have enabled the development of new technologies and the creation of entire new industries that have transformed society.

The Carnegie Institution for Science supported Barbara McClintock, who never applied for federal funds, did not have many assistants over the course of her career, and did not like to publish peer-reviewed articles. Yet she won the Nobel Prize in Physiology or Medicine in 1983 for her discovery of transposable elements. She told me that once, when a manuscript she had submitted for publication was rejected, she decided not to bother with publications in the future. Instead she published her results in the Carnegie Institution’s Yearbook, a decision that may have saved her much time and aggravation. She was not the only Nobel Laureate affiliated with the Carnegie Institution: other laureates include Alfred Hershey, who won the 1969 prize in Physiology or Medicine for discovering DNA as the basis for genetics, and Andrew Fire, who won the 2006 prize in Physiology or Medicine for RNA interference.

These examples, covering many fields of science and engineering over a period of a hundred years or more, illustrate the following key point: discoveries leading to true innovation, often serendipitous, reflect the vision of individuals. Neither government nor philanthropic organizations will be able to consistently make the right prediction as to where to direct research support for maximum impact. Instead, a hybrid system, encouraging and enabling both directed and free research, may offer a reasonable solution, which may well be more economical than today’s preference for funding large programs, consortia, and institutions. Thus, public universities in the United States have recognized that establishing endowed chairs, while not covering the holder’s salary, does provide a base-level of independent funding to pursue risky ideas, as in today’s Max Planck Institutes, and may offer an alternative to conventional research programs.

Joachim Messing is Director of the Waksman Institute of Microbiology; University Professor of Molecular Biology; and Selman A. Waksman Chair in Molecular Genetics at Rutgers, The State University of New Jersey. He was elected a Fellow of the American Academy of Arts and Sciences in 2016.

© 2017 by Joachim Messing

ACKNOWLEDGMENTS

I would like to thank Michael Seul, a former member of the technical staff at Bell Labs, for reviewing this essay.

ENDNOTES

1. J. C. Venter et al., “The Sequence of the Human Genome,” Science 291 (5507) (2001): 1304–1351; E. S. Lander et al., “Initial Sequencing and Analysis of the Human Genome,” Nature 409 (6822) (2001): 860–921.

2. J. Messing, R. Crea, and P. H. Seeburg, “A System for Shotgun DNA Sequencing,” Nucleic Acids Research 9 (2) (1981): 309–321.

3. R. C. Gardner et al., “The Complete Nucleotide Sequence of an Infectious Clone of Cauliflower Mosaic Virus by M13mp7 Shotgun Sequencing,” Nucleic Acids Research 9 (12) (1981): 2871–2888.

4. A. P. Czernilofsky et al., “Nucleotide Sequence of an Avian Sarcoma Virus Oncogene (src) and Proposed Amino Acid Sequence for Gene Product,” Nature 287 (5779) (1980): 198–203.

5. D. Pendlebury, “Science Leaders – Researchers to Watch in the Next Decade,” The Scientist 4 (11) (1990): 18–19.

6. S. A. Waksman, “Streptomycin: Background, Isolation, Properties, and Utilization,” Science 118 (3062) (1953): 259–266.