Chapter 4: Reversing the Null: Regulation, Deregulation, and the Power of Ideas

David A. Moss

It has been said that deregulation was an important source of the recent financial crisis.1 It may be more accurate, however, to say that a deregulatory mindset was an important source of the crisis: a mindset that, to a very significant extent, grew out of profound changes in academic thinking about the role of government.

The influence of academic ideas in shaping public policy is often underestimated. John Maynard Keynes famously declared that the “ideas of economists and political philosophers, both when they are right and when they are wrong, are more powerful than is commonly understood. Indeed, the world is ruled by little else.”2 Although perhaps exaggerated, Keynes’s dictum nonetheless contains an important element of truth—and one that looms large in the story of regulation and deregulation in America.

As scholars of political economy quietly shifted their focus from market failure to government failure over the second half of the twentieth century, they set the stage for a revolution in both government and markets, the full ramifications of which are still only beginning to be understood. This intellectual sea change generated some positive effects, but also, it seems, some negative ones. Today, the need for new regulation, particularly in the wake of the financial crisis, may require another fundamental shift in academic thinking about the role of government.

This essay begins with two stories: one about events (including financial crises and regulation) and the other about ideas (especially the shift in focus from market failure to government failure). Understanding the interplay between these two stories is essential for understanding not only the recent crisis but also what needs to be done, both politically and intellectually, to prevent another one. Meaningful policy reform is essential, but so too is a new orientation in scholarly research. This shift will require nothing less than a reversal of the prevailing null hypothesis in the study of political economy. I discuss what this “prevailing null” is—and what it needs to be—in the second half of the essay. First, though, two stories lay the foundation for what comes next.

THE RISE AND FALL OF FINANCIAL REGULATION IN THE UNITED STATES

The first story—about events—begins with a long series of financial crises that punctuated American history up through 1933.3 Starting when George Washington was president, major panics struck in 1792, 1797, 1819, 1837, 1857, 1873, 1893, 1907, and 1929–1933. Although lawmakers responded with a broad range of policies, from state banking and insurance regulation throughout the nineteenth century to the creation of the Federal Reserve in the early twentieth, none of these reforms succeeded in eliminating financial panics.

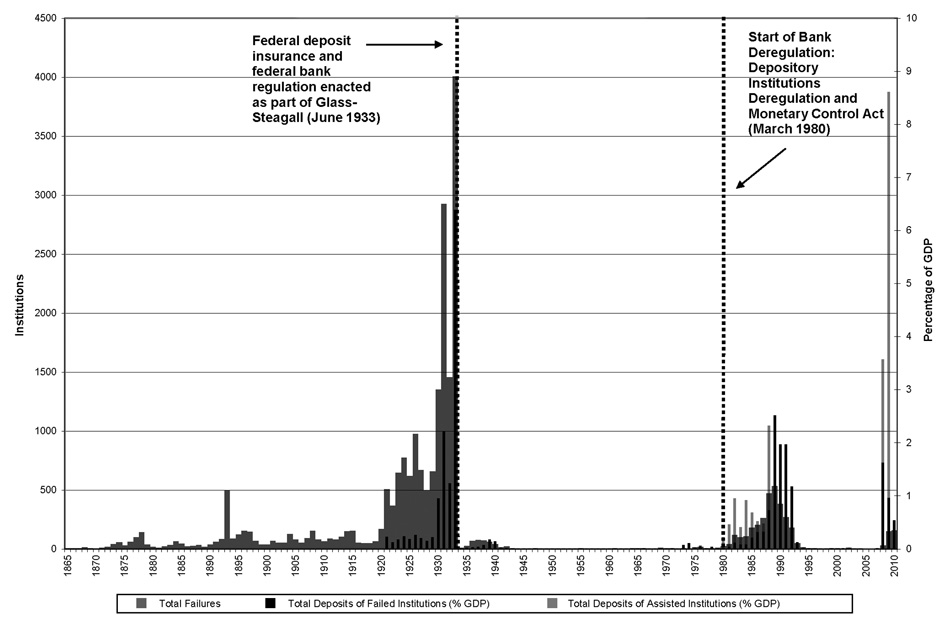

Only with the adoption of New Deal financial regulation (including the Banking Acts of 1933 and 1935, the Securities Exchange Act of 1934, and the Investment Company Act of 1940) did the United States enjoy a long respite from further panics. In fact, Americans did not face another significant financial crisis for about fifty years, which represented by far the longest stretch of financial stability in the nation’s history (see Figure 1). Importantly, this was also a period of significant financial innovation, with U.S. financial institutions—from investment banks to venture capital firms—quickly becoming the envy of the world.

Figure 1: A Unique Period of Calm amid the Storm: Bank Failures and Suspensions, 1865 to 2010

Data on deposits begin in 1921. This chart, prepared with the assistance of Darin Christensen, is based on an earlier version in David A. Moss, “An Ounce of Prevention: Financial Regulation, Moral Hazard, and the End of ‘Too Big to Fail,’” Harvard Magazine, September–October 2009. Source: Historical Statistics of the United States: Colonial Times to 1970, Series X-741, 8 (Washington, D.C.: Government Printing Office, 1975), 1038; “Federal Deposit Insurance Corporation Failures and Assistance Transactions: United States and Other Areas,” Table BF01, http://www2.fdic.gov/hsob; Richard Sutch, “Gross Domestic Product: 1790–2002,” in Historical Statistics of the United States, Earliest Times to the Present: Millennial Edition, ed. Susan B. Carter et al. (New York: Cambridge University Press, 2006), Table Ca9-19.

One reason the American financial system performed so well over these years is that financial regulators were guided by a smart regulatory strategy, which focused aggressively on the greatest systemic threat of the time, the commercial banks, while allowing a relatively lighter regulatory touch elsewhere. This approach made sense because most financial crises up through the Depression were essentially banking crises. As a result, the dual strategy of tough regulation (and insurance) of the commercial banks along with lighter (more disclosure-based) regulation of the rest of the financial system helped ensure both stability and innovation—a precious combination. Notably, the strategy was not devised by any one person or group, but rather arose out of the workings of the American political system itself, which required continual compromise and accommodation. In the end, the regulatory strategy appears to have worked, helping produce a long “golden era” of financial stability and innovation in America that lasted through much of the twentieth century.

This unprecedented period was dramatically interrupted following a new experiment in financial deregulation, commencing with passage of the Depository Institutions Deregulation and Monetary Control Act of 1980 and the Garn-St. Germain Depository Institutions Act of 1982. Before long, the nation faced a sharp increase in failures at federally insured depository institutions, including both commercial banks and savings and loans, an episode commonly known as the S&L crisis. Although a degree of re-regulation, enacted as part of the Financial Institutions Reform, Recovery and Enforcement Act of 1989 (FIRREA), proved useful in putting out the S&L fire, the broader movement for financial deregulation continued through the early years of the twenty-first century. Particularly notable were passage of the Gramm-Leach-Bliley Act of 1999, which repealed the Glass-Steagall separation of commercial from investment banking; the Commodity Futures Modernization Act of 2000, which prohibited the Commodity Futures Trading Commission and the Securities and Exchange Commission (SEC) from regulating most over-the-counter derivatives; and the SEC’s 2004 decision, driven in large part by Gramm-Leach-Bliley, to allow the largest investment banks to submit to voluntary regulation with regard to leverage and other prudential requirements.4

Although certain deregulatory initiatives may have contributed to the financial crisis of 2007 to 2009, more important was a broader deregulatory mindset that impeded the development of effective regulatory responses to changing financial conditions. In particular, the explosive growth of major financial institutions, including many outside of the commercial banking sector, appears to have generated dangerous new sources of systemic risk.

Among the nation’s security brokers and dealers, for example, total assets increased from $45 billion (1.6 percent of GDP) in 1980 to $262 billion (4.5 percent of GDP) in 1990, to more than $3 trillion (22 percent of GDP) in 2007.5 Many individual institutions followed the same pattern, including Bear Stearns, the first major investment bank to collapse in the crisis, whose assets had surged more than tenfold from about $35 billion in 1990 to nearly $400 billion at the start of 2007.6

Undoubtedly, the nation’s supersized financial institutions—from Bear Stearns and Lehman Brothers to Citigroup and AIG—played a central role in the crisis. They proved pivotal not only in inflating the bubble on the way up but also in driving the panic on the way down. As asset prices started to fall as a result of the subprime mess, many of these huge (and hugely leveraged) financial firms had no choice but to liquidate assets on a massive scale to keep their already thin capital base from vanishing altogether. Unfortunately, their selling only intensified the crisis and, in turn, their balance sheet problems. Had the terrifying downward spiral not been stabilized through aggressive federal action, the nation’s financial system might have collapsed altogether.
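The downward spiral described above can be sketched as a stylized loop. This is only an illustration under stated assumptions, not a model drawn from the source: a single hypothetical firm targets a fixed leverage ratio, uses sale proceeds to repay debt, and faces a linear price impact from its own selling. The function name and every parameter value are hypothetical.

```python
def fire_sale_spiral(units=100.0, price=1.0, debt=95.0, shock=0.01,
                     target_leverage=20.0, price_impact=0.05, rounds=5):
    """Return a list of (price, equity, units_sold) tuples, one per round.

    All parameter values are hypothetical and chosen only for illustration.
    """
    price *= 1.0 - shock                      # initial fall in asset prices
    history = []
    for _ in range(rounds):
        assets = units * price
        equity = assets - debt
        if equity <= 0:                       # capital base wiped out
            break
        # Value of assets that must be sold to return to target leverage.
        sale_value = max(assets - target_leverage * equity, 0.0)
        units_sold = sale_value / price
        fraction_sold = units_sold / units
        units -= units_sold
        debt -= sale_value                    # sale proceeds repay debt
        # Assumed linear price impact: selling pushes the price down further.
        price *= 1.0 - price_impact * fraction_sold
        history.append((price, units * price - debt, units_sold))
    return history


for price, equity, units_sold in fire_sale_spiral():
    print(f"price={price:.4f}  equity={equity:.2f}  units sold={units_sold:.2f}")
```

In this sketch each round of selling restores the target leverage only at the pre-sale price. The price impact of the sale then erodes equity again, so the firm must sell once more in the next round.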

Given the enormous systemic risk posed by the supersized financial institutions, federal officials felt they had little choice but to bail out many of them—ironically, the very firms that had helped cause the crisis in the first place. The failure of Lehman in September 2008, and the severe financial turmoil that ensued, demonstrated just how much systemic damage one of these financial behemoths could inflict if allowed to collapse.

Clearly, the nation’s largest and most interconnected financial institutions had become major sources of systemic risk, even though many of them (including Bear Stearns, Lehman, and Fannie Mae, among others) operated entirely outside of commercial banking. Had we updated our original (1933 to 1940) regulatory strategy in the 1990s or early 2000s to account for these new sources of systemic risk, meaningful regulation of the largest and most systemically significant financial institutions (including, potentially, tough leverage and liquidity requirements) would have ensued. Indeed, had such regulation been developed and enforced, the worst of the crisis might well have been avoided. But, regrettably, there was simply no appetite for devising new financial regulation at that time given the pervasive belief that private actors could most effectively manage financial risks on their own, without interference from government regulators.7

The problem, therefore, was not so much deregulation per se, but rather a deregulatory mindset that hampered the development of new regulation that, in retrospect, was desperately needed to address the emergence of new systemic threats in the financial sector. The intellectual sources of this deregulatory mindset long predated the crisis. Indeed, they are part of a second story—one focused on the development and transformation of ideas—which is the subject of the next section.

FROM MARKET FAILURE TO GOVERNMENT FAILURE: REVERSING THE NULL

Within the academy, ideas about the proper role of government in the economy were turned almost completely upside down over the course of the twentieth century. Until at least the 1960s, economists devoted particular attention to the problem of market failure, rejecting the notion that free markets always optimized social welfare, and believing that well-conceived government intervention could generally fix these failures in the private marketplace. By the 1970s, however, cutting-edge scholarship in both economics and political science increasingly spotlighted the problem of government failure. Even if markets sometimes failed, these new studies suggested, public intervention was unlikely to remedy market deficiencies because government failure (it was often claimed) was far more common and severe.

In a sense, what had changed was the prevailing null hypothesis in the study of government and markets.8 If students of market failure were responding to—and rejecting—the null that markets are perfect, then students of government failure were now responding to a new null—that government is perfect—and doing their best to reject it. This transformation (or, loosely, reversal) of the prevailing null hypothesis would fundamentally reshape academic research on the role of the state, encouraging scholars over the last third of the twentieth century to focus relentlessly on both the theory and practice of government dysfunction.9 When President Ronald Reagan announced in his first inaugural address in 1981 that “government is not the solution to our problem; government is the problem,” his approach was entirely consistent with a new generation of scholarship on government failure.

Market Failure

Although the first relevant use of the term “market failure” dates to 1958, when Francis Bator published “The Anatomy of Market Failure” in The Quarterly Journal of Economics, the broader notion that private market activity might not always maximize social welfare goes back considerably further. Perhaps the earliest expression of the externality concept should be credited to Henry Sidgwick, who observed in The Principles of Political Economy (1887) that individual and social utility could potentially diverge. A generation later, another British economist, Arthur Cecil Pigou, developed the idea further, first in Wealth and Welfare (1912) and subsequently in The Economics of Welfare (1920, 1932). Indeed, because Pigou was the first to suggest that a well-targeted tax could be used to internalize an externality, such an instrument is still known among economists as a Pigouvian tax.10
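A minimal worked example may help show how such a tax internalizes an externality. The linear schedules and numbers below are hypothetical, chosen only to keep the arithmetic simple. They are not taken from Pigou or from any source cited here.

```latex
% Hypothetical linear schedules, for illustration only
\begin{align*}
\text{Marginal benefit:}\quad & MB(q) = 100 - q \\
\text{Marginal private cost:}\quad & MPC(q) = 20 + q \\
\text{Marginal external cost:}\quad & MEC(q) = 20 \\[4pt]
\text{Unregulated market:}\quad & MB(q_m) = MPC(q_m)
  \;\Rightarrow\; 100 - q_m = 20 + q_m \;\Rightarrow\; q_m = 40 \\
\text{Social optimum:}\quad & MB(q^*) = MPC(q^*) + MEC(q^*)
  \;\Rightarrow\; 100 - q^* = 40 + q^* \;\Rightarrow\; q^* = 30 \\
\text{Pigouvian tax:}\quad & t = MEC(q^*) = 20 \\
\text{Market with tax:}\quad & MB(q_t) = MPC(q_t) + t
  \;\Rightarrow\; 100 - q_t = 40 + q_t \;\Rightarrow\; q_t = 30 = q^*
\end{align*}
```

Under these assumptions the unregulated market overproduces (40 units rather than 30). A per-unit tax equal to the marginal external cost at the optimum leads private decision makers to choose the socially optimal quantity.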

Market failure theory continued to develop over the course of the twentieth century. In addition to externalities and public goods, economists identified and formalized numerous other potential sources of market failure, including asymmetric information (especially adverse selection and moral hazard) and imperfect competition (such as monopoly, oligopoly, and monopsony).11

Across nearly all of this work on market failure was the underlying idea that good public policy could remedy market deficiencies and thus improve on market outcomes, enhancing efficiency and, in turn, overall social welfare. At about the same time that Pigou was conceiving of his internalizing tax in Britain, reform-minded economists in America were claiming that social insurance could be used to minimize industrial hazards (such as workplace accidents and even unemployment) by internalizing the cost on employers. As John Commons explained in 1919 regarding newly enacted workers’ compensation laws (which required employers to compensate the victims of workplace accidents), “We tax [the employer’s] accidents, and he puts his best abilities in to get rid of them.”12 Pigou himself endorsed a tax on gasoline that covered the cost of wear and tear on the roads, and even the playwright George Bernard Shaw suggested in 1904 that the “drink trade” be “debited with what it costs in disablement, inefficiency, illness, and crime.”13 Beyond Pigouvian taxes, the identification of market failures has been used to justify a wide range of economic policies. These policies include, among many other examples, public spending on education, national defense, and public parks (to support classic public goods); environmental regulation (to limit environmental externalities, such as pollution); and compulsory insurance, such as Medicare (to address the problem of adverse selection in private insurance markets). In fact, market failure theory is among the most powerful intellectual rationales—some would say, the most powerful rationale—for government intervention in the marketplace.

Government Failure

The problem, of course, is that there is no guarantee that government (and the political system that drives it) has the capacity to identify and fix market failures in anything close to an optimal manner. Beginning as early as the late 1940s, various strands of research began raising significant questions about the integrity and rationality of democratic decision-making, about the legitimacy and efficacy of majoritarian politics, about the attentiveness and knowledge-base of voters, and about the public mindedness of lawmakers and civil servants. Methodologically, what nearly all these strands of research had in common was the application of basic tools of economic analysis to the study of politics and public decision-making.

Just two years after Duncan Black’s landmark 1948 paper in the Journal of Political Economy introduced the median voter hypothesis, Kenneth Arrow published in the same journal a statement of his impossibility theorem, which proved that there exists no system of voting that can reflect ranked voter preferences while meeting even basic rationality criteria (such as the requirement that if every voter prefers A to B, the electoral outcome will always favor A over B, regardless of the existence of alternative choice C). Seven years later, in 1957, Anthony Downs’s An Economic Theory of Democracy pointed to the so-called rational ignorance of voters. James Buchanan and Gordon Tullock followed in 1962 with The Calculus of Consent, which offered a profound economic analysis of collective decision-making, including a strong critique of majoritarianism. Rounding out the foundational texts of public choice theory, Mancur Olson published The Logic of Collective Action in 1965, highlighting in particular the power of special interests to exploit opportunities involving concentrated benefits and diffuse costs.14
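The difficulty that Arrow generalized can be seen in a much older and simpler case, the Condorcet paradox, in which pairwise majority voting over three options produces a cycle. The sketch below is an illustration of that paradox, not a statement of Arrow's theorem itself. The three ballots and the function names are hypothetical.

```python
from itertools import combinations

# Hypothetical ballots: each tuple ranks options from most to least preferred.
ballots = [
    ("A", "B", "C"),
    ("B", "C", "A"),
    ("C", "A", "B"),
]


def majority_prefers(x, y, ballots):
    """Return True if a strict majority of ballots rank x above y."""
    votes_for_x = sum(1 for b in ballots if b.index(x) < b.index(y))
    return votes_for_x > len(ballots) / 2


def pairwise_winners(ballots):
    """Map each pair of options to its head-to-head majority winner (None on a tie)."""
    options = sorted(ballots[0])
    results = {}
    for x, y in combinations(options, 2):
        if majority_prefers(x, y, ballots):
            results[(x, y)] = x
        elif majority_prefers(y, x, ballots):
            results[(x, y)] = y
        else:
            results[(x, y)] = None
    return results


def condorcet_winner(ballots):
    """Return the option that beats every other option head-to-head, or None."""
    options = sorted(ballots[0])
    for x in options:
        if all(majority_prefers(x, y, ballots) for y in options if y != x):
            return x
    return None


results = pairwise_winners(ballots)
winner = condorcet_winner(ballots)
print(results)
print(winner)
```

With these ballots, A beats B, B beats C, and C beats A, so no option defeats all the others. Each voter's ranking is perfectly consistent, yet the majority's collective ranking is not.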

Meanwhile, a closely related body of thought, which also tended to venerate the market, was beginning to take shape at the University of Chicago. In “The Problem of Social Cost,” published in The Journal of Law & Economics in 1960, Ronald Coase (who was then at the University of Virginia but would soon move to Chicago) showed that externalities could be eliminated through trade in the absence of transaction costs. After apparently being the first to label Coase’s contribution the “Coase Theorem,” George Stigler launched a bold new approach to the study of regulation with his 1971 paper, “The Theory of Economic Regulation.” Stigler’s paper formalized the notion of regulatory capture (which grew out of rent-seeking on the part of both interest groups and regulators) and inaugurated what would ultimately become known as the Economic Theory of Regulation. Milton Friedman, meanwhile, had published Capitalism and Freedom in 1962, arguing that to best guarantee political freedom, private economic activity should be left to the market and insulated from government intervention. Government involvement in economic matters, he maintained, not only violated freedom through coercion but also generally spawned severe unintended consequences.15

By the mid- to late 1970s, the study of government failure, in all its various forms, had come to rival—and, in terms of novelty and energy, perhaps had even overtaken—the study of market failure in the social sciences. Indeed, Charles Wolf’s synthesis, “A Theory of Nonmarket Failure,” published in The Journal of Law & Economics in 1979, reflected the field’s coming of age.16 “The principal rationale for public policy intervention,” Wolf wrote, “lies in the inadequacies of market outcomes. Yet this rationale is really only a necessary, not a sufficient, condition for policy formulation. Policy formulation properly requires that the realized inadequacies of market outcomes be compared with the potential inadequacies of nonmarket efforts to ameliorate them.”17 Ironically, as Wolf points out in a footnote, one of the earliest articulations of this insight about the potential for government failure belongs to Henry Sidgwick, who was also perhaps the earliest contributor to the theory of market failure. “It does not follow,” Sidgwick wrote in his 1887 Principles of Political Economy, “that whenever laissez faire falls short government interference is expedient; since the inevitable drawbacks of the latter may, in any particular case, be worse than the shortcomings of private enterprise.”18

A Distorted Picture of Government?

Certainly, the study of governmental failure has advanced our understanding of both the logic and limits of government involvement in the economy, including government efforts to remedy market failure. But just as the study of market failure in isolation may produce a distorted picture of government’s capacity to solve problems, so too may an excessively narrow focus on government failure produce an exaggerated picture of government’s incapacity to do almost anything constructive at all.

As studies of government dysfunction and democratic deficiency have piled up over the past several decades, cataloguing countless theories and examples of capture, rent-seeking, and voter deficiencies, consumers of this literature could be forgiven for concluding that democratic governance can do nothing right. It would be as if health researchers studied nothing but medical failure, including medical accidents, negligence, and fraud. Even if each individual study were entirely accurate, the sum total of this work would be dangerously misleading, conveying the mistaken impression that one should never go to the doctor or the hospital. Unfortunately, the same may be true of social science research on government failure. When carried to an extreme, it may leave the mistaken impression that we should never turn to the government to help address societal problems.

Such an impression is difficult to reconcile with practical experience. While no one would say that government regulation is perfect, who among us would prefer to live without many of the key regulatory agencies, such as the FDA, the EPA, the Federal Reserve, the FDIC, the FAA, or the NTSB?19 Naturally, each of these agencies falters from time to time and could be improved in countless ways; but what proportion of Americans would seriously say that the nation would be better off if they were dismantled altogether? To take just one example, imagine how it would feel getting on an airplane if there were no FAA or NTSB, and the responsibility for air safety were left entirely to the airlines themselves.

The bottom line is that government failure is likely far from absolute. Most of us probably appreciate that government regulators inspect our meat, check the safety and efficacy of our prescription drugs, and vigorously search for threats to the safety of our major modes of transportation. And yet, in the social sciences—and especially in economics and related fields—the appeal of continuing to bludgeon the government-is-perfect null remains remarkably strong, as does the influence of the resulting research.

While a good understanding of government failure is essential, it now seems that the original effort to correct the excessively rosy view of government associated with market failure theory has itself ended in overcorrection and distortion. In the face of the financial crisis of 2007 to 2009, the earlier drive to deregulate the financial markets, which was so profoundly influenced by the weight of academic opinion and the study of government failure, appears to stand as “Exhibit A” of just such an overcorrection and its tragic real-world consequences.20

THE CASE FOR REVERSING THE NULL, ONCE AGAIN

Today, in the wake of the most perilous financial crisis since 1933, there have been widespread calls for new regulation. I myself have put forward a proposal for identifying and regulating the most systemically dangerous financial institutions—those large and interconnected firms commonly characterized as “too big to fail.”21 A basic premise of my proposal is that New Deal financial regulatory reform worked, paving the way for a highly dynamic financial system that remained virtually crisis-free for a half-century. The essential strategy of those New Deal reforms was to aggressively regulate the greatest systemic threats of the time (especially those associated with commercial banking) while exercising a relatively lighter touch elsewhere. In this way, the regulatory reforms helped ensure both stability and innovation in the financial system.

Unfortunately, somewhere along the way, many observers (including many academics) began to take that stability for granted. Financial crises were now just a distant memory, and the link between their disappearance and the rise of New Deal regulation was largely forgotten. From there, it became all too easy to view financial regulation as a costly exercise with few, if any, benefits. Many existing regulations were soon weakened or removed, and still more troubling, policy-makers often refrained from introducing new regulations even as new systemic threats began to take shape.

One of the main goals of financial regulation going forward, therefore, must be to update our financial regulatory system to address these new systemic threats—especially the systemically dangerous financial institutions that played such a large role in driving the crisis.

Policy proposals like this one, however, will not be sufficient by themselves. If the post-crisis push for better regulation is to succeed over the long term, I believe it must become rooted in a new generation of research on the role of government. Our predecessors have taught us a great deal about market failure and government failure. Now the goal must be to figure out when government works best and why: that is, what distinguishes success from failure.

Take the problem of regulatory capture, for example. George Stigler and his successors modeled the phenomenon and highlighted numerous cases in which special interests appear to have captured regulators (and legislators). This represents a very important contribution. The question now is what comes next. Unless one believes that capture is absolute and immutable, affecting all policy-makers equally in all contexts, the next logical step would be to try to identify cases in which capture was relatively mild or nonexistent, and then to use the variance across cases to generate testable hypotheses about why some public policies, agencies, and officials are more vulnerable to capture than others.22 If we could gain a better understanding of what accounts for high versus low vulnerability, it might become possible to design public policies and regulatory structures with a relatively high degree of immunity to capture.

Such an approach would imply the need for a new null hypothesis in the study of government. If market failure theory grew out of a markets-are-perfect null, and the exploration of government failure grew out of a government-is-perfect null, then perhaps what is required now is a government-always-fails null. This would push researchers to examine whether (as some students of government failure seem to believe) government always fails and to look hard for cases of success to reject the null. The goal would not be simply to create a catalog of government successes, but ultimately to identify, with as much precision as possible, the essential conditions that separate success from failure.

Fortunately, some scholars have already begun moving in this direction. Dan Carpenter’s work on market-constituting regulation and Steven Croley’s on regulatory rule-making in the public interest are valuable cases in point.23 But much more is needed. To complement the rich work on government failure that has emerged over the past several decades, we need a similarly broad-based effort exploring what works in government, and why. It is time, in short, to reverse the null once again.

REGULATION, DEREGULATION, AND THE POWER OF IDEAS

The financial crisis of 2007 to 2009 exposed severe weaknesses in American financial regulation and, in turn, in our ideas about financial regulation and the role of government more broadly.

With regard to the regulation itself, we had no meaningful mechanism for monitoring and controlling new forms of systemic risk. Massive financial firms—the failure of any one of which could pose grave danger to the financial system—were allowed to take extraordinary risks during the boom years, with leverage ratios rising into the stratosphere. There was also far too little protection of the consumer borrower; and a number of particularly strategic players in the financial system (especially credit rating agencies) operated with virtually no oversight whatsoever. Some say the problem was deregulation itself, which dismantled critical protections. In my own work, I have placed greater emphasis on a deregulatory mindset that inhibited the development of new regulation—or the modernization of existing regulation—in response to changing financial conditions. Either way, it seems clear that our regulatory system failed us.

Looking forward, it is imperative that our policy-makers devise and implement effective systemic regulation. I have suggested particularly vigorous regulation of the most systemically dangerous financial firms, with the dual goal of making them safer and encouraging them to slim down.

But as we think about regulatory reform, including both enactment and implementation, it is important to diagnose correctly the causes not only of the financial crisis itself but also of the regulatory failure that paved the way for both the bubble and the subsequent crash. Ironically, part of the explanation for the latter may relate to the growing focus within the academy on government failure, which helped convince policy-makers that regulation was generally counterproductive. The influence of the leading scholars in the field, concentrated especially at Chicago and Virginia, appears to have been quite large. It was no coincidence, for example, that President Reagan chose as his lead official on deregulation Christopher DeMuth, who had studied under both George Stigler and Milton Friedman at the University of Chicago. Not long after Stigler won the 1982 Nobel Prize in Economics, DeMuth characterized him as “the intellectual godfather of what we’re trying to do in the regulatory area.”24

In the specific domain of financial deregulation, general academic work on government failure combined with two additional influences, particularly in the 1990s and early 2000s, to help shape the worldview of numerous lawmakers and regulators. These influences were (1) a growing faith in the near-perfect efficiency of financial markets (the product of another successful field of academic research that was taken, perhaps, to an unreasonable extreme) and (2) a fading memory of the many crises that had punctuated American history before the adoption of New Deal financial regulation from 1933 to 1940. In tandem with these factors, the relentless academic focus on failure in the study of government, especially within the discipline of economics, may have fostered a distorted picture in which public policy could almost never be expected to serve the public interest.

Finally, then, if we are to introduce, implement, and sustain effective regulation—in the financial sector and elsewhere—we will need a new generation of academic research exploring the essential questions of when government works and why. Naturally, the question of what constitutes success within the policy arena will remain controversial. But at least it is a question that takes us in the right direction.

SUMMARY

More than a quarter-century after George Stigler’s Nobel Prize (and just short of a quarter-century after James Buchanan’s), the field of public choice, with its intense focus on government failure, has emerged as perhaps the closest we have to an orthodoxy in political economy. Certainly, the discipline succeeded in changing the prevailing null hypothesis in the study of government from “markets are perfect” to “government is perfect,” leading throngs of researchers to search for signs of government failure in an effort to reject the government-is-perfect null. This work taught us a great deal about the potential limits of public policy. But just as early market failure theory may have left the mistaken impression that government could fix any market failure, the study of government failure may have left the equally mistaken impression that government can do nothing right.

If so, then it may be time to reverse the prevailing null hypothesis once again, adopting the most extreme conclusion of the government failure school—that government always fails—as the new null. From there, the goal would be to try to reject this new null, and most important, to try to identify conditions separating government success from failure. Although such a shift in academic focus could take root only gradually, it could eventually mark a vital complement to the new and more assertive regulatory policies that appear to be emerging in response to the crisis. Without an underlying change in ideas, new regulatory approaches will almost inevitably atrophy over time, leaving us in the same exposed position that got us into trouble in the lead-up to the recent crisis. Looking ahead, we can—and must—do better.

ENDNOTES

4. On the 2004 SEC decision, see Securities and Exchange Commission, “Chairman Cox Announces End of Consolidated Supervised Entities Program,” press release 2008-230, September 26, 2008, http://www.sec.gov/news/press/2008/2008-230.htm (accessed November 22, 2009).

5. Federal Reserve Board, “Flow of Funds Accounts of the United States, Historical,” Z.1 release, http://www.federalreserve.gov/releases/z1/Current/data.htm.

6. Data, courtesy of the New York Federal Reserve, are drawn from company 10-Qs.

7. Alan Greenspan, the former chairman of the Federal Reserve, acknowledged one aspect of this perspective in his now famous testimony before the House Committee on Oversight and Government Reform in October 2008. Greenspan stated, “[T]hose of us who have looked to the self-interest of lending institutions to protect shareholder’s equity (myself especially) are in a state of shocked disbelief.” See Testimony of Alan Greenspan, House Committee on Oversight and Government Reform, U.S. Congress, October 23, 2008, http://oversight.house.gov/images/stories/documents/20081023100438.pdf.